Winter 2024 Course Review: EECS 482 (4 credit)¶

2024-04-30

Course Title: Introduction to Operating Systems

Rating: 4/5

Instructor (Brian Noble)¶

Pros:

- Talks clearly

- Has a schedule

- Lots of interesting stories so you learn something even if you fail 482 (see Trivia

Cons:

- Practically none?

What did I learn?¶

Course topics:

- Threads and concurrency

- Mutexes & condition variables

- Semaphores

- CPU scheduling

- Virtual memory

- Address spaces

- Page tables

- Page replacement (swapping)

- Filesystems

- inodes

- Consistency after crashing

- Network

- Sockets

- Client-server model

- Distributed systems

- Miscellaneous

- RAID

- LFS (log-structured filesystem)

Brian constantly brought up the five tools of computer science:

- Caching

- Hashing

- Indirection

- Replication

- Encryption

482 discusses 1 and 3 in great detail, 2 and 4 occasionally. The philosophical takeaway is:

- Whenever you have something to protect, add a layer of indirection (e.g. using virtual addresses for user programs)

- If there's too much data to copy, also use indirection

- It can't be indirection all the way down; there must be something fixed by convention (e.g. the first sector being the bootloader)

- If indirection gets slow, add caching

- If you want consistency, use replication (e.g. RAID with redundancy)

The course is all about abstraction:

- Threads are abstractions for CPUs

- Virtual memory is an abstraction for physical memory

- Filesystems are abstractions for disks

- Sockets are abstractions for network interfaces

Part of abstraction design is to provide user programs with the illusion that resource is infinite. A process can spawn thousands of threads on an 8-core machine, and they take turns to execute, thanks to the OS scheduler. Of course, at some point the context switching overhead overshadows the benefits of parallelism, but you could.

Another job of the OS is to protect a program from other programs. You can't trust user programs. Therefore you must build a layer of abstraction so that the user program can never "control" the machine. The three things a user program is allowed to do:

- Touch its own property, e.g. address space, open files

- Touch property that another process explicitly shared with it

- Transfer control back to the OS with a syscall

The point is that the OS is an absolute tyrant. A user program can never run for as long as it likes, nor can it touch anything other than what it's explicitly allowed to. To achieve this, the OS conspires with the hardware so that it can intervene when certain things happen:

- Every few milliseconds, the timer interrupt fires, transferring control over the CPU to the OS

- The program issues a load/store marked unreadable/unwritable, and the MMU triggers a page fault, also handled by the OS

Although the timer and the MMU are technically part of hardware, they always hand over control to the OS. They're part of the abstraction. Without the timer, it would be up to a user program to give up the CPU, which makes it really easy to freeze a machine. Without the MMU, user programs would use direct addressing, enabling anyone to tamper with anyone's memory.

However, the OS can bypass either restriction, and it is the only one allowed to do so. When a timer interrupt fires and hands control over to the OS, the first thing the OS does is to disable the interrupt, so the OS itself gets to run for as long as it likes. Once its job is done, it re-enables the interrupt, and hands control back to a user program (may not be the same one). We don't give user programs the ability to control interrupts; not MMU either.

We just discussed the first half of the course. The second half was less mind-bending and more optimization trickery with "make the common case fast" heuristics. However we did make a big deal about consistency.

The disk only guarantees block writes are atomic. Therefore, if you need to write two blocks to disk and you lose power halfway, you could have written zero, one, or two blocks. That basically means you just lost two blocks of data, because you're unsure either block is the right version. Worst case is you end up breaking essential metadata, which corrupts the entire filesystem.

We can play clever tricks like careful ordering of block writes so the last block we write "commits" the changes. But when this is not possible, due to cyclical dependencies, we came up with the notion of journaling, which doubles the cost of writes because we always keep a copy of the data we're writing in the journal until we're sure these data made it where they belong. Where is the journal? On disk. At this point I was convinced that disks just suck. We're paying 100% overhead just to handle the 0.001% chance that the machine crashes. But to deliver the "persistent storage" promise, we have to pay that cost.

The disk is just a big pool of zeroes and ones — and as such there is no way to distinguish a block of metadata and a block of data. You have to allocate a static location as the "boss of metadata"; for example, writing the root inode to block 0, from which we can discover every descendent, as we did in project 4.

Personal reflections¶

The reason why I enrolled in the course is all my friends did it. It was taught by Manuel, one of the "signature" professors we had at JI, Shanghai. It's one of the most challenging courses there ever was, so being the arrogant boy, I took it. Peer pressure except it was me who pressured myself into taking it.

The most mind-blowing chapter, also the one I once had the most incorrect understanding about, is virtual memory.

Rewind to 2021, when I was taking VG151, intro to CS. I had no idea what

virtual memory was, and was amazed that malloc always handed me roughly

the same address every time, beginning with 0x7fff or something, as if

that part of the RAM was always free. I had mistakenly thought that all

processes share one big heap on the physical memory, and malloc had

a global table of free chunks. It does not. Each process has its own

address space, and the user program does not know, nor does it care, where

it lives on physical memory.

The MMU also explains a gap in my brain. Prior to this course, I thought the OS would translate memory addresses of a program before it ran (which is not possible because you can dynamically allocate memory). Now it became clear that there is a dedicated piece of hardware that does the translation.

Projects¶

Project 1 is solo, 2-4 are done in a group of two or three; mine was three. We were enrolled in the 4-credit version of 482, which means we're only required to do the core version, which is supposedly less work.

We met Mondays and/or Wednesday mornings at 10 AM at the Duderstadt. It was a great excuse to spend money on muffins and coffee at the cafe. Nearly every time we sat at the same table with a giant screen. There was an HDMI cable but it doesn't work. I ended up opening a Zoom meeting.

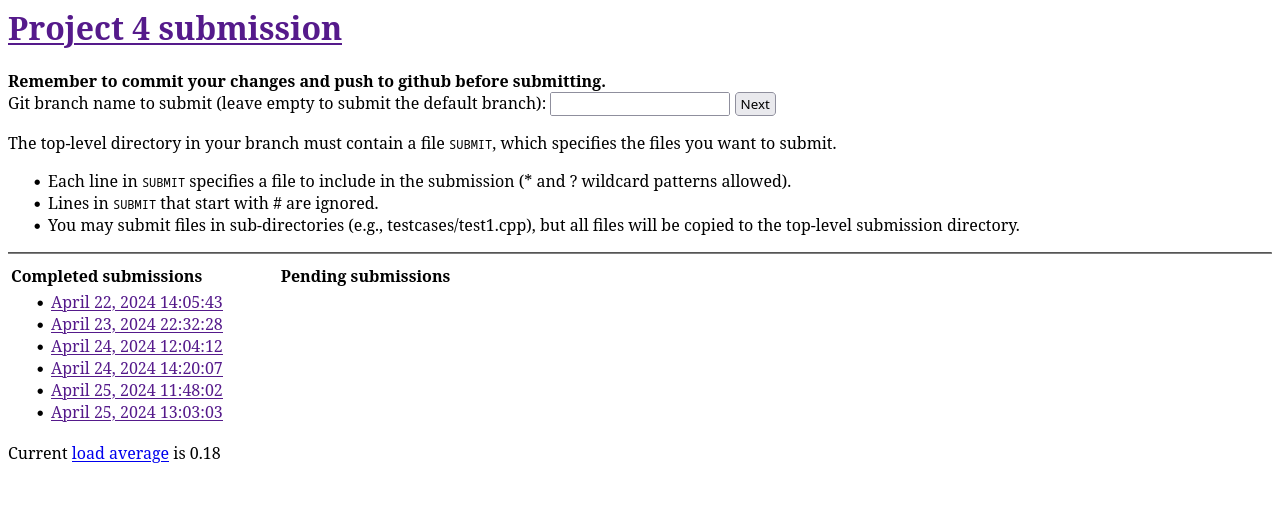

Of the three autograders I've used at UMich, this is the least visually interesting one, besides only allowing one submit per day with feedback, plus three bonus submissions for each project.

It does fit in the brutalist aesthetic of the website.

Project 1: Multithreaded disk scheduler¶

Given mutexes and condition variables, build a disk scheduler.

Not that hard, took me a few tries to get used to the paradigm of signaling after work is done.

Project 2: Thread library¶

We built our own threads, mutexes, and condition variables. We also get to decide what happens in a timer interrupt.

The hardest part was taking care of all the ucontext_t objects, which

can't be moved because they contain pointers to themselves. We had trouble

keeping track of which stacks and which ucontext_ts we could delete,

because the lifetime of the thread object is unrelated to that of the

thread's execution flow. We ended up playing pointer tricks, which is

basically shared pointers with extra steps.

Here is a snippet of code I wrote at 2024-02-20 00:30:

void thread::delete_context(ucontext_t *context) {

auto it = cpu::self()->stacks.find(context);

assert(it != cpu::self()->stacks.end());

// delete stack of thread that previously died

// like burying your sibling's bones

// pour one out for the binary spirits

// freed from deallocated homes

delete[] cpu::self()->stack_to_delete;

// we cannot simply delete the stack

// of the thread that is running

// for a ghastly segfault taketh us aback

// with a coredump equally alarming

cpu::self()->stack_to_delete = it->second;

cpu::self()->stacks.erase(it);

delete context;

}

Another design mistake is how we used the ucontext_t pointer in the

mutex as a unique identifier for threads. It turns out the instructors

were so cunning that they managed to make our library allocate

a ucontext_t exactly where one was just deleted, tricking our library

into thinking the new thread owned the mutex. I still have a hard time

believing it was possible. They probably overloaded operator new on

a modded version of ucontext_t.

I was very involved in project 2; I wrote more than half of the code, and thus knew it like the back of my hand.

Project 3¶

Virtual memory pager. Manages memory address translation, paging, and swapping. Lots of data structures needed, even a state diagram.

I grossly underestimated the complexity. The first time I read the specs, I was like, "pfft! single level page tables?" But it was the hardest project for me.

Unlike project 2, project 3 had much less effort from me. All I did was draw the state diagram and propose the data structures and their names. Then I got consumed by 373 and the workload shifted to Samuel who basically did it all on his own. Therefore I don't understand it as thoroughly as project 2. By the end of project 3 I was completely lost.

The data structures were a complete mess. Here is a list of structs we defined:

shadow_table_entry_tfilename_block_tpage_occupant_tfile_occupant_tswap_occupant_tpage_status_t

which are distinct and redundant in a chaotic way. It's a miracle we passed all the test cases.

The lack of involvement resulted in a relatively poor understanding of the pager, so I devoted more energy to project 4.

Project 4¶

Network filesystem. Listens on a TCP port and handles concurrent read/write/create/delete requests from clients.

I think I did a pretty good job at factoring out just the redundant code while handling special cases in respective functions. Fortunately we just have four request types (endpoints) to handle, so a little redundancy is ok.

My least favorite part of this project is how strictly we had to enforce

the one request string format. The request string is delimited with one

space. No leading or trailing whitespace. All this while claiming that

parsing is "is amenable for processing by istringstream (e.g.,

operator>>)". My sibling in heaven, istringstream is not the one you

want if whitespace is significant. I ended up using regexes, because it's

preferable to having to read one character at a time while constantly

checking whether you've exhausted the string stream. I regret nothing.

Project 4 was the first time I actually used RAII in a productive way. Because every filesystem access is a traversal down a tree, and we want to prevent race conditions, every descent requires that we grab a lock and release a lock. Some are reader locks and some are writer locks. This is cumbersome to manage manually, so I wrote an RAII wrapper that keeps track of what locks we are currently holding and what type of locks they are. At the end of the object lifetime, the destructor simply goes over the list and unlocks them one by one. If we call a function that needs to lock/unlock, we simply pass the wrapper by reference. Easy!

I'm not sure if this is healthy, but I cringe whenever someone optimizes

code to "fit the scenario at hand". In the early stage of the project,

I wrote a function called alloc_block(), which takes void and returns

the block number just taken out of a pool. Later we realized that if an

operation needs two allocs, but failed in between, then the first one

would never be freed. We thought it would be better if we could atomically

alloc two blocks, so I suggested my teammate to generalize the function to

take one optional parameter and return potentially more than one block.

When the function was reimplemented, I found that it was not quite what I meant. I was thinking something along the lines of

std::vector<uint32_t> alloc_block(uint32_t count = 1);

What it ended up being was

std::pair<uint32_t, uint32_t> alloc_block(uint32_t count = 1);

which returns the allocated block # in the second element of the pair if

count == 1 (because it kinda fits our semantics better), and returns two

valid block #s if count != 1.

I feel uncomfortable that count did not function as advertised; it

behaved more like a bool. But since we're using our late days (it's the

first time I ever used a late day) and we're tired, I let it slide.

Verdict¶

Now that I've taken the course, done the projects and sit through the exams, I do not regret taking this course. I do, however, regret taking it with two more workload EECS courses, one of which (373) is also heavy-workload.

I would recommend Brian Noble to anyone, no questions asked. He is my GOAT professor of the semester. The slides follow the motivation-solution-problem-remedy train, which tightly model the actual evolution of real systems in the e.g. 1980s. It's interesting to know what came before modern systems and what Linus Torvalds et al. needed to take care of when they crafted the revolutionary kernel.

Trivia¶

Brian always has stories to tell. My favorite story is how when he was young, his advisor talked to him about his unnecessarily complex wording in his paper. I personally do believe the doctrine, "why many word when few word do trick".

Later on a lecture on log-based filesystems, he had a callback to the story. He goes (paraphrased):

What I lament about project 2 is, the right way to identify who owns the mutex is to add something to your data structure. But you never do that. What you do is you add another map. So what you end up with a lot of suprooflo- su- sur- (waves hand) I can't say it. Uhh… extra. My advisor would be happy about that. Extra maps.